StackNet 1.1: Decentralized AI Task Execution

Abstract

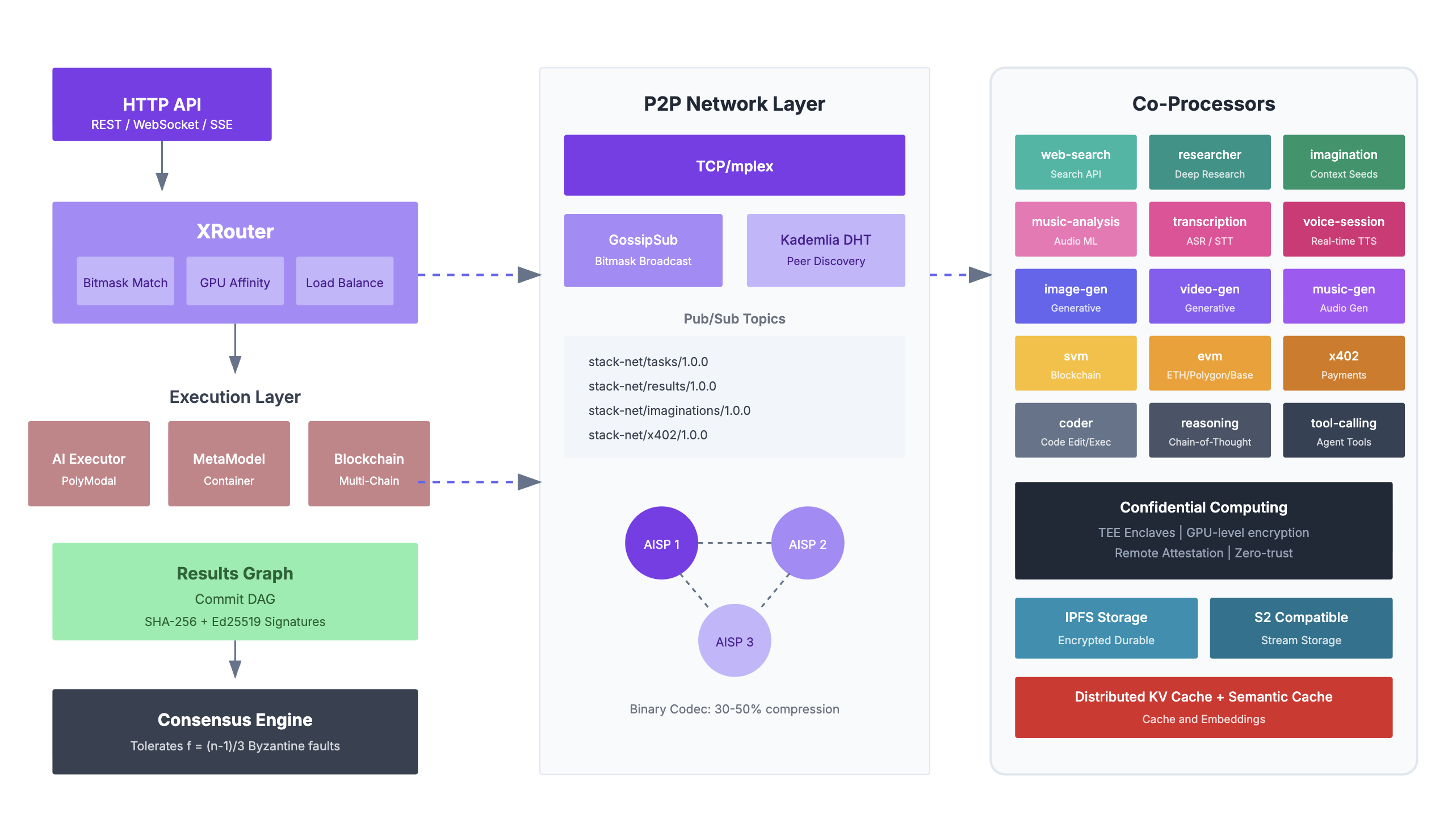

We present StackNet, a decentralized peer-to-peer task execution network designed for confidential, trustless AI. StackNet is PolyModal, providing unified routing and execution across the full spectrum of AI workloads: text inference, vision understanding, code generation, text-to-speech (TTS), speech-to-text (STT), automatic speech recognition (ASR), instruction following, multi-step reasoning, media generation, and tool calling. As foundation models proliferate with varying capabilities, costs, and performance characteristics, the challenge of optimally routing requests across heterogeneous compute resources while preserving privacy and enabling seamless monetization for compute operators has become paramount. StackNet addresses this through a novel architecture that combines consensus, confidential computing enclaves, an intelligent PolyModal routing system, and adaptive payment rails for compute resources.

Central to StackNet is the XRouter, an intelligent routing system that dynamically selects the most suitable model for each query based on task complexity, cost constraints, and performance requirements. The router implements over 9 distinct routing strategies organized into three categories: stateless routing, tool routing (agent), and session routers that adapt to ongoing contexts. These strategies encompass techniques including semantic caching, matrix factorization, KNN similarity matching, SVM classification, graph-based routing for dependency resolution, and Elo- and BERT-based signaling.

StackNet introduces x402 payment integration for trustless compute monetization, enabling token-usage-based execution billing in which each task submission is settled along the chain of user to network, network to agent, agent to operator, and operator to dataset, attributing credit to each resource. This eliminates the need for centralized billing infrastructure while ensuring atomic settlement: nodes only execute tasks upon verified payment, and clients only pay for successfully completed work. The protocol supports stablecoin payments across multiple chains with sub-second settlement finality.

For privacy-sensitive workloads, StackNet leverages confidential computing through GPU-level, hardware-encrypted Trusted Execution Environments (TEEs) that process data within hardware-isolated enclaves. This ensures that neither the node operator nor other network participants can observe the plaintext inputs, model weights, or outputs during inference. Remote attestation provides cryptographic proof that computation occurred within a genuine secure enclave running unmodified code, enabling verifiable private AI without trusting any single party.

The architecture extends through a co-processor framework that enables specialized execution units to handle domain-specific tasks. Co-processors include the Arena co-processor for competitive model evaluation and ranking (used by XRouter), the MetaStream co-processor for real-time metadata aggregation, the Tool co-processor for orchestrating MetaModel server invocations, the Agent co-processor for managing autonomous agent lifecycles, and the Points co-processor for tracking reputation and reward distributions. Each co-processor operates as an independent module that can be composed into complex execution pipelines.

StackNet enables any compute operator to become an AI Service Provider (AISP) through a streamlined onboarding process. Operators obtain a cryptographic key from the network registry, load the StackNet server runtime on their infrastructure, and connect their key to the network's control plane. Once authenticated, the key acts as the operator's identity and authorization credential, enabling the control plane to manage all aspects of the node's participation. This includes coordination of task assignment and load distribution across the operator's resources, model management for dynamic loading, warming, and versioning of LLM weights, ejection protocols that gracefully remove misbehaving or underperforming nodes from the active pool, and network orchestration that maintains global consistency of routing tables, capability registries, and consensus participant sets. The AISP model transforms idle GPU capacity into monetizable inference endpoints without requiring operators to build custom infrastructure—they simply provide compute, and StackNet handles discovery, routing, billing, and quality assurance.

AISPs earn revenue through three primary execution channels. Inference earnings accrue on a per-token basis for both input processing and output generation, with rates dynamically adjusted based on model complexity, latency requirements, and current network demand—operators running larger models or achieving lower latency command premium rates. Agent earnings compensate operators for hosting persistent agent sessions that maintain state across multiple interactions; AISPs receive a session fee for agent instantiation plus per-step fees as agents execute reasoning chains, tool invocations, and sub-task delegations. Task execution earnings reward operators for completing discrete computational jobs including image generation, audio transcription, code execution, and document processing—each task type carries a base fee plus resource-proportional charges for GPU-seconds, memory utilization, and storage I/O. All earnings settle atomically via x402 payment channels: clients pre-authorize payment bounds, operators execute work, and funds transfer upon cryptographic proof of completion. The network maintains transparent fee schedules and real-time earnings dashboards, enabling AISPs to optimize their infrastructure allocation across inference, agent, and task workloads based on current market rates and their hardware capabilities.
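The three earnings channels and the bounded settlement step can be sketched as simple arithmetic. This is a minimal illustration, not StackNet's billing code: the function names, the integer currency unit, the rates used in the usage note, and the choice to cap a charge at the pre-authorized bound are all assumptions. Memory and storage I/O charges are omitted for brevity.

```python
from dataclasses import dataclass

# All amounts are integers in a hypothetical smallest currency unit
# (for example, micro-units of a stablecoin) to avoid float rounding.


@dataclass(frozen=True)
class InferenceRates:
    per_input_token: int
    per_output_token: int


def inference_fee(rates: InferenceRates, input_tokens: int, output_tokens: int) -> int:
    """Per-token fee covering both input processing and output generation."""
    return rates.per_input_token * input_tokens + rates.per_output_token * output_tokens


def agent_fee(session_fee: int, per_step_fee: int, steps: int) -> int:
    """Session fee for instantiation plus a fee for each executed step."""
    return session_fee + per_step_fee * steps


def task_fee(base_fee: int, gpu_seconds: int, per_gpu_second: int) -> int:
    """Base fee plus a resource-proportional charge (GPU-seconds only here)."""
    return base_fee + gpu_seconds * per_gpu_second


def settle(fee: int, authorized_max: int, completed: bool) -> int:
    """Amount transferred at settlement.

    Assumption: nothing is charged for incomplete work, and a completed
    charge never exceeds the bound the client pre-authorized.
    """
    if not completed:
        return 0
    return min(fee, authorized_max)
```

With the hypothetical rates `InferenceRates(2, 6)`, a request with 1000 input tokens and 500 output tokens costs 5000 units; `settle(5000, 4000, True)` would transfer only the 4000 units the client authorized, and `settle(5000, 10000, False)` would transfer nothing.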

StackNet introduces Imagination, a novel context primitive fundamentally distinct from traditional memory systems. While memory stores and retrieves past interactions, Imaginations are author-attributed, portable context seeds that proactively enrich inference contexts with domain knowledge, specialized instructions, or curated perspectives. Each Imagination carries cryptographic provenance linking it to its creator, enabling a new paradigm of attribution-first inclusion where content contributors are recognized whenever their Imaginations influence model outputs. The MetaModel and Imagination systems operate in concert: when an Imagination is injected into a context window, the system tracks which tokens derive from authored content versus user input versus model generation. This token-level lineage enables token utilization economics—authors receive proportional compensation based on how extensively their Imaginations contributed to valuable outputs. The result is a sustainable creator economy for AI context, where knowledge curators, domain experts, and prompt engineers can publish Imaginations to the network and earn ongoing revenue as their contributions improve inference quality across the ecosystem.
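One way to picture token-level lineage is to tag every token in a context with its origin and pay each author in proportion to their share. The sketch below is only an illustration under stated assumptions: the `imagination:<author>` label format, the `author_payouts` function, and the rule that an author's share is measured against all tokens in the context (so user and model tokens dilute it) are hypothetical, since the source does not define the payout formula.

```python
from collections import Counter

# Hypothetical label format: "user", "model", or "imagination:<author>".
IMAGINATION_PREFIX = "imagination:"


def author_payouts(token_sources: list[str], author_pool: float) -> dict[str, float]:
    """Split a payout pool among Imagination authors by token share.

    Each author receives author_pool * (their tokens / all tokens).
    Tokens from users and from the model earn nothing in this sketch.
    """
    if not token_sources:
        return {}
    total = len(token_sources)
    counts = Counter(
        source[len(IMAGINATION_PREFIX):]
        for source in token_sources
        if source.startswith(IMAGINATION_PREFIX)
    )
    return {author: author_pool * n / total for author, n in counts.items()}
```

For a 100-token context holding 30 tokens from one author and 20 from another, a pool of 100.0 would pay them 30.0 and 20.0 respectively under this rule.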

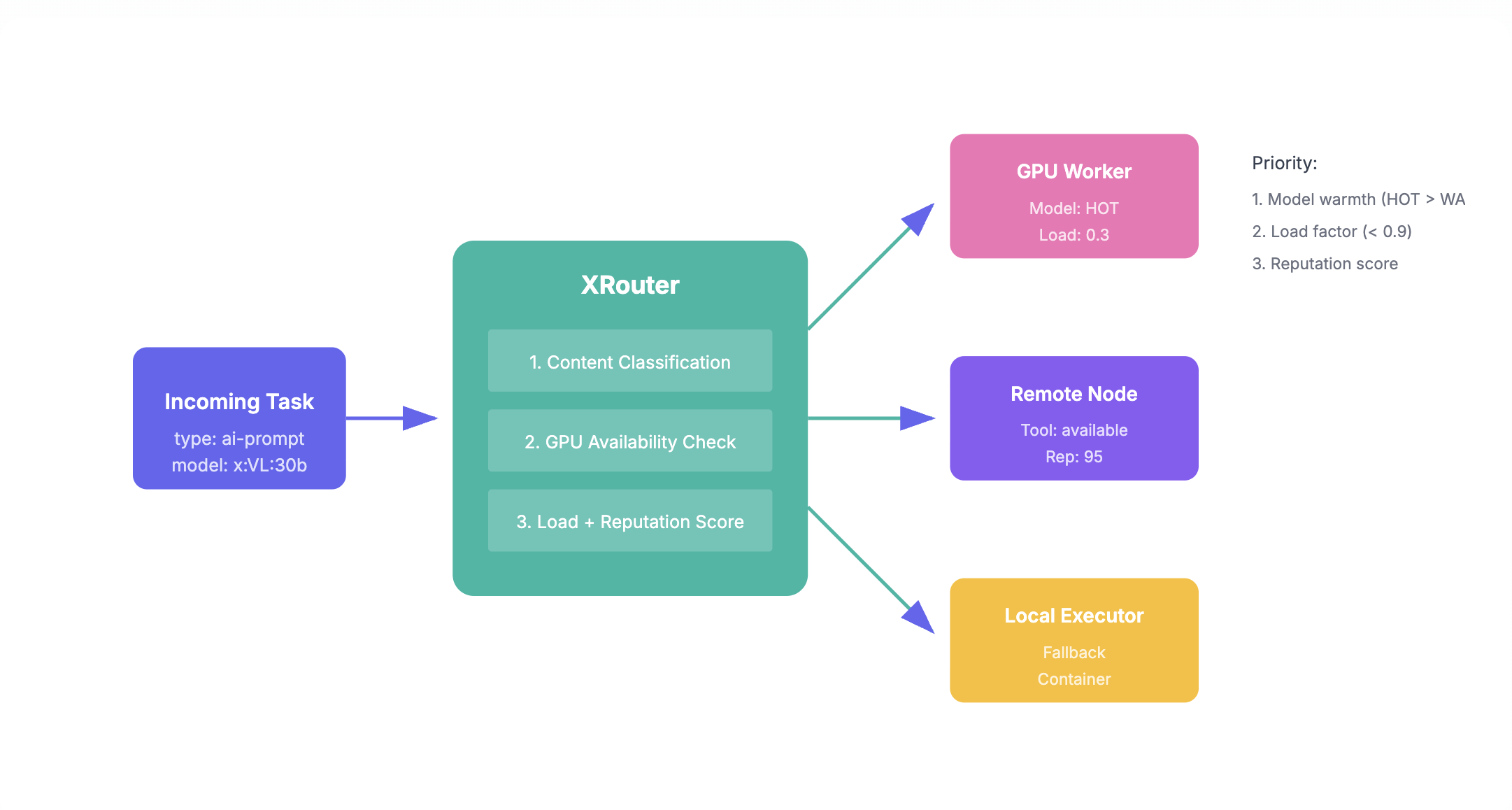

XRouter: Intelligent Model Selection

At the core of StackNet's task execution lies XRouter, an intelligent routing system engineered to optimize LLM inference by dynamically selecting the most suitable model for each query. Rather than defaulting to a single model or requiring manual selection, XRouter analyzes incoming requests in real-time and routes them to the optimal backend based on task complexity, cost constraints, and performance requirements.

XRouter employs a novel Chain-of-Thought guided Diffusion Transformer (DiT) architecture for complex routing decisions. When a query arrives, the system first generates an explicit reasoning trace using Chain-of-Thought prompting—decomposing the routing decision into discrete analytical steps: task classification, capability requirements, latency sensitivity, and cost-quality tradeoffs. This reasoning trace is then encoded as conditioning signals for the DiT, which models the probability distribution over routing outcomes. The diffusion process iteratively refines an initial noise vector into a routing decision embedding, guided at each denoising step by the CoT-derived conditions. This approach enables XRouter to capture complex, non-linear relationships between query characteristics and optimal model assignments that traditional classifiers miss, while maintaining interpretability through the explicit reasoning chain. The DiT's generative nature also allows graceful handling of novel query types by interpolating between known routing patterns in the learned latent space.

The router implements a taxonomy of 9 routing strategies organized into three major categories. Stateless routing decides each query independently, using techniques such as KNN similarity matching against the semantic cache, SVM classification boundaries learned from labeled task-model pairs, and routers trained on VAE/embedding representations. Session routers extend this by maintaining conversation context, enabling more nuanced decisions as dialogues progress. Tool routing (agent) handles complex tool-use scenarios where the routing decision depends on the anticipated chain of tool calls.
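As an illustration of the stateless KNN strategy, the sketch below routes a query to whichever model was chosen most often for its nearest previously seen queries. It assumes queries are already embedded as numeric vectors; the function names, the cosine similarity metric, and the majority vote are illustrative choices rather than XRouter's documented behavior.

```python
import math
from collections import Counter


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def knn_route(query: list[float],
              examples: list[tuple[list[float], str]],
              k: int = 3) -> str:
    """Pick a model by majority vote among the k most similar examples.

    `examples` pairs a query embedding with the model that handled it
    well (hypothetical labeled data, such as entries in a semantic cache).
    """
    if not examples:
        raise ValueError("at least one labeled example is required")
    ranked = sorted(examples, key=lambda ex: cosine(query, ex[0]), reverse=True)
    votes = Counter(model for _, model in ranked[:k])
    return votes.most_common(1)[0][0]
```

Because the decision depends only on the query embedding and the stored examples, no conversation state is needed.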

Advanced routing strategies include network-level agent co-processor augmented Elo rating systems that continuously update model rankings based on pairwise comparison outcomes, graph-based routing for resolving task dependencies and orchestrating multi-step workflows, BERT-based semantic routing that understands query intent at a deep level, and hybrid probabilistic methods that combine multiple routing signals through Bayesian inference. The system also supports transformed-score routers that normalize heterogeneous quality metrics into comparable routing scores.
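The pairwise rating update behind Elo-style model ranking can be sketched with the standard Elo formula. The K-factor of 32 and the 400-point scale are the conventional defaults, assumed here; the source does not state which parameters StackNet uses.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, score_a: float,
               k: float = 32.0) -> tuple[float, float]:
    """Return updated ratings after one pairwise comparison.

    score_a is 1.0 if model A's output was preferred, 0.0 if model B's
    was, and 0.5 for a tie. The total rating is conserved.
    """
    expected_a = expected_score(rating_a, rating_b)
    delta = k * (score_a - expected_a)
    return rating_a + delta, rating_b - delta
```

For two models both rated 1500, a win for the first moves the ratings to 1516 and 1484, since each side's expected score is 0.5.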

StackNet exposes this routing intelligence through a unified interface supporting programmatic API access, command-line tools for training custom routing models, and interactive exploration. A complete data generation pipeline enables training from 11 benchmark datasets with automatic API calling and evaluation, allowing operators to fine-tune routing behavior for their specific workload characteristics.

XRouter achieves ultra-low-latency workload coordination through a compact bitmask broadcasting protocol. Each AISP node continuously publishes three 256-bit vectors: a resource bitmask encoding hardware capabilities (GPU memory tiers, VRAM availability, CPU cores, network bandwidth classes), a capability bitmask representing supported modalities and models (text, vision, code, TTS, ASR, specific model families, quantization levels), and an availability bitmask indicating current load state across execution slots. These bitmasks propagate through the P2P gossip layer with sub-10ms update latency, enabling every node to maintain a real-time view of network-wide capacity. When a task arrives, XRouter performs bitwise AND operations between the task's requirement vector and cached node bitmasks to instantly identify candidate executors—no round-trip queries required. This approach reduces routing decisions to nanosecond-scale bit operations, enabling XRouter to evaluate thousands of potential execution targets in microseconds and achieve consistent sub-millisecond routing latency even as the network scales to thousands of AISPs.
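The matching step can be sketched with Python integers standing in for the 256-bit vectors. The bit layout, the class names, and the rule that any set availability bit means a free slot are assumptions made for illustration; the source specifies neither the encoding nor the exact match predicate. A node qualifies here when ANDing its mask with the requirement returns the requirement unchanged, meaning every required bit is present.

```python
from dataclasses import dataclass

MASK_BITS = 256


def bits(*positions: int) -> int:
    """Build a 256-bit mask with the given bit positions set."""
    mask = 0
    for p in positions:
        if not 0 <= p < MASK_BITS:
            raise ValueError(f"bit {p} is outside 0..{MASK_BITS - 1}")
        mask |= 1 << p
    return mask


@dataclass(frozen=True)
class NodeMasks:
    node_id: str
    resource: int      # hardware capabilities
    capability: int    # supported modalities and models
    availability: int  # execution slots currently free (assumed encoding)


@dataclass(frozen=True)
class TaskRequirement:
    resource: int
    capability: int


def covers(node_mask: int, required: int) -> bool:
    """True when every required bit is also set in the node's mask."""
    return (node_mask & required) == required


def candidates(task: TaskRequirement, nodes: list[NodeMasks]) -> list[str]:
    """Filter cached node masks locally, with no round-trip queries."""
    return [
        n.node_id
        for n in nodes
        if covers(n.resource, task.resource)
        and covers(n.capability, task.capability)
        and n.availability != 0
    ]
```

Each check is a handful of integer operations over locally cached masks, which is what keeps candidate selection cheap as the number of nodes grows.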

Results

Image PolyModal Pipeline

Seed-upscaled, character-conditioned iterative shot synthesis using diffusion models via latent concatenation and negative RoPE shifts with LoRA fine-tuning. Execution distributed across 4 Operator nodes, 3 Models, and 2 MetaModel invocations.

Audio PolyModal Pipeline

Text-to-audio synthesis with lyrics, using an augmented style-metadata-guided Diffusion Transformer (DiT) with Chain-of-Thought. Execution distributed across 1 Operator node, 1 Model, and 3-4 MetaModel invocations per step.

Lyrics

Framework & Application